1.3.2 - 10 May 2023

- Use default initialiser if not using a custom env by @adriangonz in SeldonIO#1104

- Add support for online drift detectors by @ascillitoe in SeldonIO#1108

- added intera and inter op parallelism parameters to the hugggingface … by @saeid93 in SeldonIO#1081

- Fix settings reference in runtime docs by @adriangonz in SeldonIO#1109

- Bump Alibi libs requirements by @adriangonz in SeldonIO#1121

- Add default LD_LIBRARY_PATH env var by @adriangonz in SeldonIO#1120

- Ignore both .metrics and .envs folders by @adriangonz in SeldonIO#1132

- @ascillitoe made their first contribution in SeldonIO#1108

Full Changelog: https://github.com/SeldonIO/MLServer/compare/1.3.1...1.3.2

1.3.1 - 27 Apr 2023

- Move OpenAPI schemas into Python package (#1095)

1.3.0 - 27 Apr 2023

WARNING

⚠️ : The1.3.0has been yanked from PyPi due to a packaging issue. This should have been now resolved in>= 1.3.1.

More often that not, your custom runtimes will depend on external 3rd party dependencies which are not included within the main MLServer package - or different versions of the same package (e.g. scikit-learn==1.1.0 vs scikit-learn==1.2.0). In these cases, to load your custom runtime, MLServer will need access to these dependencies.

In MLServer 1.3.0, it is now possible to load this custom set of dependencies by providing them, through an environment tarball, whose path can be specified within your model-settings.json file. This custom environment will get provisioned on the fly after loading a model - alongside the default environment and any other custom environments.

Under the hood, each of these environments will run their own separate pool of workers.

The MLServer framework now includes a simple interface that allows you to register and keep track of any custom metrics:

[mlserver.register()](https://mlserver.readthedocs.io/en/latest/reference/api/metrics.html#mlserver.register): Register a new metric.[mlserver.log()](https://mlserver.readthedocs.io/en/latest/reference/api/metrics.html#mlserver.log): Log a new set of metric / value pairs.

Custom metrics will generally be registered in the [load()](https://mlserver.readthedocs.io/en/latest/reference/api/model.html#mlserver.MLModel.load) method and then used in the [predict()](https://mlserver.readthedocs.io/en/latest/reference/api/model.html#mlserver.MLModel.predict) method of your custom runtime. These metrics can then be polled and queried via Prometheus.

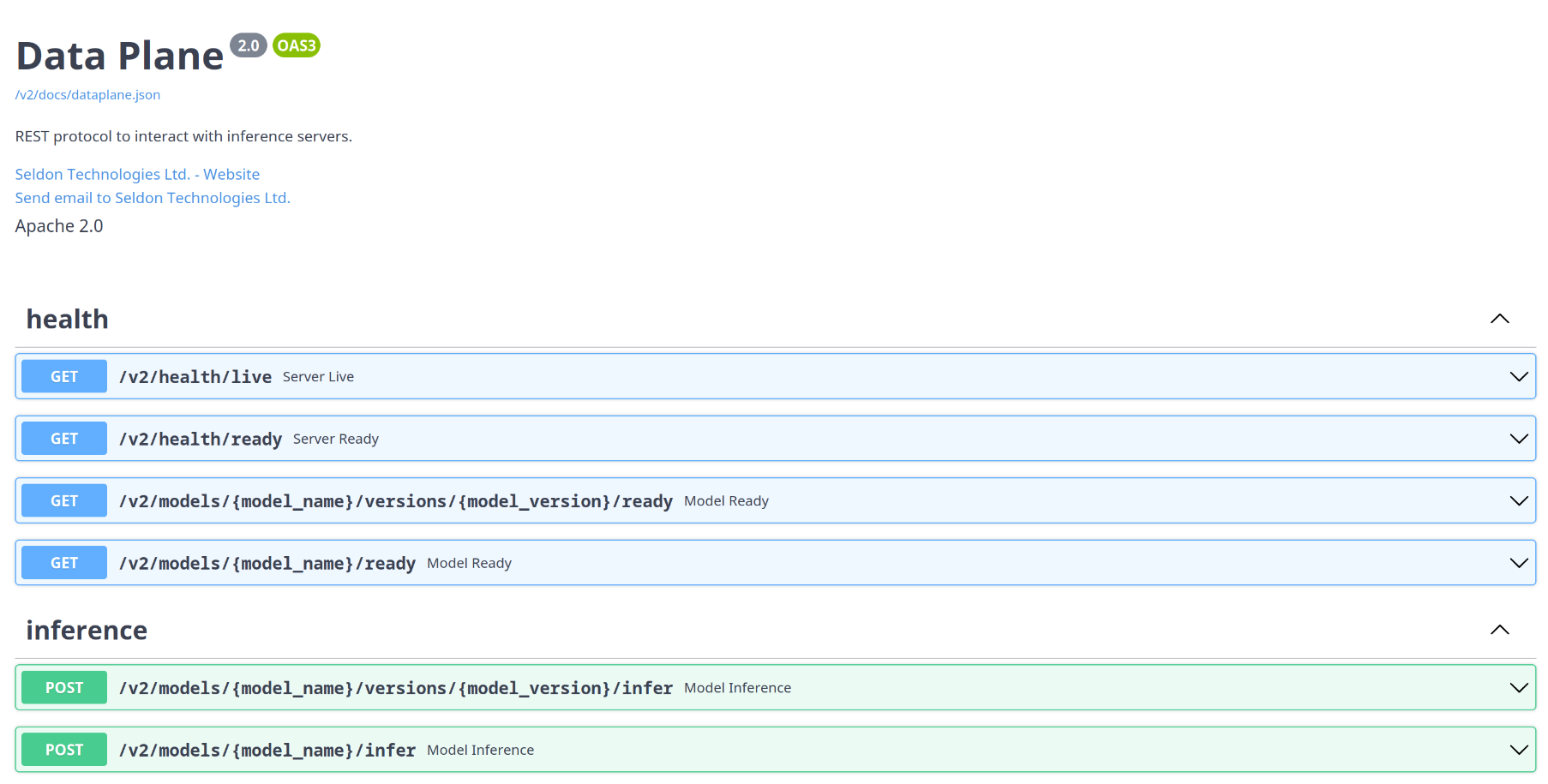

MLServer 1.3.0 now includes an autogenerated Swagger UI which can be used to interact dynamically with the Open Inference Protocol.

The autogenerated Swagger UI can be accessed under the /v2/docs endpoint.

Alongside the general API documentation, MLServer also exposes now a set of API docs tailored to individual models, showing the specific endpoints available for each one.

The model-specific autogenerated Swagger UI can be accessed under the following endpoints:

/v2/models/{model_name}/docs/v2/models/{model_name}/versions/{model_version}/docs

MLServer now includes improved Codec support for all the main different types that can be returned by HugginFace models - ensuring that the values returned via the Open Inference Protocol are more semantic and meaningful.

Massive thanks to @pepesi for taking the lead on improving the HuggingFace runtime!

Internally, MLServer leverages a Model Repository implementation which is used to discover and find different models (and their versions) available to load. The latest version of MLServer will now allow you to swap this for your own model repository implementation - letting you integrate against your own model repository workflows.

This is exposed via the model_repository_implementation flag of your settings.json configuration file.

Thanks to @jgallardorama (aka @jgallardorama-itx ) for his effort contributing this feature!

MLServer 1.3.0 introduces a new set of metrics to increase visibility around two of its internal queues:

- Adaptive batching queue: used to accumulate request batches on the fly.

- Parallel inference queue: used to send over requests to the inference worker pool.

Many thanks to @alvarorsant for taking the time to implement this highly requested feature!

The latest version of MLServer includes a few optimisations around image size, which help reduce the size of the official set of images by more than ~60% - making them more convenient to use and integrate within your workloads. In the case of the full seldonio/mlserver:1.3.0 image (including all runtimes and dependencies), this means going from 10GB down to ~3GB.

Alongside its built-in inference runtimes, MLServer also exposes a Python framework that you can use to extend MLServer and write your own codecs and inference runtimes. The MLServer official docs now include a reference page documenting the main components of this framework in more detail.

- @rio made their first contribution in SeldonIO#864

- @pepesi made their first contribution in SeldonIO#692

- @jgallardorama made their first contribution in SeldonIO#849

- @alvarorsant made their first contribution in SeldonIO#860

- @gawsoftpl made their first contribution in SeldonIO#950

- @stephen37 made their first contribution in SeldonIO#1033

- @sauerburger made their first contribution in SeldonIO#1064

1.2.4 - 10 Mar 2023

Full Changelog: https://github.com/SeldonIO/MLServer/compare/1.2.3...1.2.4

1.2.3 - 16 Jan 2023

Full Changelog: https://github.com/SeldonIO/MLServer/compare/1.2.2...1.2.3

1.2.2 - 16 Jan 2023

Full Changelog: https://github.com/SeldonIO/MLServer/compare/1.2.1...1.2.2

1.2.1 - 19 Dec 2022

Full Changelog: https://github.com/SeldonIO/MLServer/compare/1.2.0...1.2.1

1.2.0 - 25 Nov 2022

MLServer now exposes an alternative “simplified” interface which can be used to write custom runtimes. This interface can be enabled by decorating your predict() method with the mlserver.codecs.decode_args decorator, and it lets you specify in the method signature both how you want your request payload to be decoded and how to encode the response back.

Based on the information provided in the method signature, MLServer will automatically decode the request payload into the different inputs specified as keyword arguments. Under the hood, this is implemented through MLServer’s codecs and content types system.

from mlserver import MLModel

from mlserver.codecs import decode_args

class MyCustomRuntime(MLModel):

async def load(self) -> bool:

# TODO: Replace for custom logic to load a model artifact

self._model = load_my_custom_model()

self.ready = True

return self.ready

@decode_args

async def predict(self, questions: List[str], context: List[str]) -> np.ndarray:

# TODO: Replace for custom logic to run inference

return self._model.predict(questions, context)To make it easier to write your own custom runtimes, MLServer now ships with a mlserver init command that will generate a templated project. This project will include a skeleton with folders, unit tests, Dockerfiles, etc. for you to fill.

MLServer now lets you load custom runtimes dynamically into a running instance of MLServer. Once you have your custom runtime ready, all you need to do is to move it to your model folder, next to your model-settings.json configuration file.

For example, if we assume a flat model repository where each folder represents a model, you would end up with a folder structure like the one below:

.

├── models

│ └── sum-model

│ ├── model-settings.json

│ ├── models.py

This release of MLServer introduces a new mlserver infer command, which will let you run inference over a large batch of input data on the client side. Under the hood, this command will stream a large set of inference requests from specified input file, arrange them in microbatches, orchestrate the request / response lifecycle, and will finally write back the obtained responses into output file.

The 1.2.0 release of MLServer, includes a number of fixes around the parallel inference pool focused on improving the architecture to optimise memory usage and reduce latency. These changes include (but are not limited to):

- The main MLServer process won’t load an extra replica of the model anymore. Instead, all computing will occur on the parallel inference pool.

- The worker pool will now ensure that all requests are executed on each worker’s AsyncIO loop, thus optimising compute time vs IO time.

- Several improvements around logging from the inference workers.

MLServer has now dropped support for Python 3.7. Going forward, only 3.8, 3.9 and 3.10 will be supported (with 3.8 being used in our official set of images).

The official set of MLServer images has now moved to use UBI 9 as a base image. This ensures support to run MLServer in OpenShift clusters, as well as a well-maintained baseline for our images.

In line with MLServer’s close relationship with the MLflow team, this release of MLServer introduces support for the recently released MLflow 2.0. This introduces changes to the drop-in MLflow “scoring protocol” support, in the MLflow runtime for MLServer, to ensure it’s aligned with MLflow 2.0.

MLServer is also shipped as a dependency of MLflow, therefore you can try it out today by installing MLflow as:

$ pip install mlflow[extras]To learn more about how to use MLServer directly from the MLflow CLI, check out the MLflow docs.

- @johnpaulett made their first contribution in SeldonIO#633

- @saeid93 made their first contribution in SeldonIO#711

- @RafalSkolasinski made their first contribution in SeldonIO#720

- @dumaas made their first contribution in SeldonIO#742

- @Salehbigdeli made their first contribution in SeldonIO#776

- @regen100 made their first contribution in SeldonIO#839

Full Changelog: https://github.com/SeldonIO/MLServer/compare/1.1.0...1.2.0